“AI must be transparent and accountable”

Hans van Ditmarsch has been appointed Professor of Artificial Intelligence at the Faculty of Science of the Open University (OU). He builds on the ideas of Virginia Dignum: AI must be transparent, so that algorithms can be checked for bias, for example. An interview.

Van Ditmarsch (1959) worked at the Open University (OU) from 1989 to 1994 as a course team leader, after which he moved to the University of Groningen. From Groningen he remained in regular contact with the OU, where he completed the propaedeutic phase in business administration in 1995. From 1996 to 2000 he worked at the University of Groningen with the OU on the BOK project (Broad Educational Innovation Knowledge Technology), and in 2009 he co-wrote the OU course Logica in Actie. He obtained his doctorate from the University of Groningen in 2000, after which he worked as an assistant professor at the University of Otago (New Zealand) and the University of Aberdeen (Scotland). He was also a senior researcher at the Universidad de Sevilla (Spain).

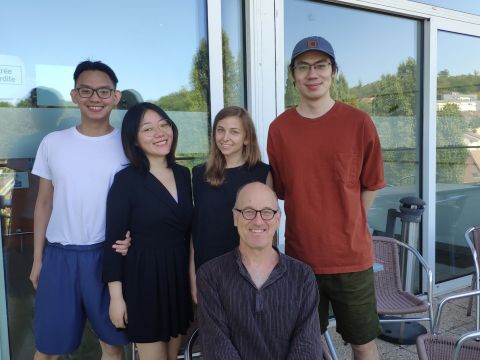

Since 2012, he has been a senior researcher at the National Center for Scientific Research (CNRS) in France, roughly the French counterpart of the NWO. He is stationed in Nancy at the Loria research institute and is therefore also affiliated with the University of Lorraine. This French position will end on June 1st.

Responsible AI

Within the OU's AI group, Van Ditmarsch emphasizes, it is Martijn van Otterlo who will teach the ethical aspects of AI. “The intention is to introduce a Responsible AI course in the OU's AI Master, based on the book of that title by Virginia Dignum, who is affiliated with Umeå University in Sweden and TU Delft. I am also in contact with Virginia, as I am an AI@Umeå scholar,” says Van Ditmarsch.

Dignum explained to the European Commission why ‘responsible AI’ (responsible use of AI) is needed and how to achieve it. In her contribution she describes the risks of AI: bias and prejudice, discrimination, devaluation of human skills, lack of control, loss of self-determination and erosion of human responsibility. On the other hand, there are benefits in the areas of healthcare, climate change mitigation, communication, education and work.

Responsible

Is “responsible AI” possible? “Responsible behavior is always possible,” Van Ditmarsch replies. “You learn it from your parents, your family, your friends and at school. And at university: in a course like Responsible AI, students get the opportunity to learn to apply and develop technology responsibly. When I hear ‘responsible AI’ I also think of something else, which is not what the term means at all: teaching students to cite already published work carefully, not to submit the same paper to several conferences, to support fellow students and other researchers, and so on. It is not at all obvious how you ‘should’ behave in an academic environment in order to be seen, in the long term, as someone who contributes to the community.”

Asked whether you need fast computers or just desktop PCs to develop responsible algorithms, Van Ditmarsch replies: “I don’t think fast computers are strictly necessary for responsible AI. But it depends on the application. I remember a discussion about banning facial recognition unless it involves criminals or terrorists. Easier said than done. Facial recognition for limited target groups does not require a supercomputer, but such an additional ethical layer seems to me to be a data science problem. Maybe that does require fast computers. On the other hand, when it comes to adapting algorithms so that the user is presented with additional decision points, this can be done on any PC.”

For so-called eye tracking (and its use in cognitive research), rather than facial recognition, the AI group at the OU has another new hire: Frouke Hermens. She will also contribute to the new AI Master.

Face recognition

“I used my student Jinsheng’s pass”

The newly appointed professor has a personal experience with facial recognition to share. “It has been clear to me for some time that a lot is being invested in AI in China. I used to go there. The last time I was in Guangzhou, at Sun Yat-sen University, as a guest of Minghui Ma and also of Yongmei Liu, either a scanned student or staff card or facial recognition was sufficient to open the entrance gates to the university campus.

“I used my student Jinsheng’s pass (he was in Amsterdam at the time). I was not in their image database, so facial recognition didn’t work for me, but I had this pass, so I got in. What I don’t know is whether facial recognition could have determined that I clearly wasn’t the owner of the pass, but the security staff at the campus entrance never raised an eyebrow.”

Communication protocols

Which applications would Van Ditmarsch most like to see developed with AI? “Communication protocols. I have recently done a lot of work myself on so-called gossip protocols, especially extensions of those protocols with user information (as opposed to extensions with network information, which are common in this area – TM). This concerns the dissemination of information in networks. One possible obstacle to applying them is the complexity of the calculations. Not the kind you can overcome with a supercomputer, but only with smarter algorithms.”

A gossip protocol is a procedure for peer-to-peer communication between computers that is modeled on the way epidemics spread. Some distributed systems use such a protocol to ensure that data reaches all members of a group.
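To make the epidemic analogy concrete, here is a minimal Python sketch of a push-style gossip simulation. It is only an illustration and not the protocols Van Ditmarsch studies: the function name `gossip_rounds`, its parameters, and the rule that every informed node contacts one uniformly random peer per round are all assumptions made for this example.

```python
import random


def gossip_rounds(num_nodes, seed=0, start=0):
    """Simulate push gossip and return the number of rounds until every
    node has the message. Hypothetical helper, for illustration only."""
    rng = random.Random(seed)      # seeded so that runs are repeatable
    informed = {start}             # nodes that already hold the message
    rounds = 0
    while len(informed) < num_nodes:
        newly_informed = set()
        for node in informed:
            # Pick a random peer other than the node itself.
            peer = rng.randrange(num_nodes - 1)
            if peer >= node:
                peer += 1
            newly_informed.add(peer)
        informed |= newly_informed  # the "infection" spreads
        rounds += 1
    return rounds


if __name__ == "__main__":
    for n in (8, 64, 512):
        print(n, "nodes:", gossip_rounds(n, seed=1), "rounds")
```

Because each informed node reaches at most one new node per round, the informed group can at most double each time, so a network of 64 nodes needs at least 6 rounds. In this simple model the message typically reaches everyone in a number of rounds that grows only slowly with the size of the network.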

A lot of attention

According to Van Ditmarsch, students are very interested in AI. “In 2018 I was invited to the Indian Institute of Technology Mandi in India. It is located in the Himalayas, very secluded, and wonderful for walking. The students were highly motivated. I was asked to judge, say, third-year projects, which ranged from electric skateboards to automatic medicine cabinets, and everyone wants to do something with ‘deep learning’, a field I am not familiar with. Nothing against souped-up skateboards, by the way. It was a pleasure and a privilege. How much interest the OU will generate remains to be seen. In any case, I will start on June 1st.”